Industry analyst research is invaluable in social-collaboration software selection

Let me warn you upfront that this article may appear self-serving. However, my main motive here is not to promote the value of our research. I am writing in response to a question we are asked all the time: do you have any data to prove the ROI of your research and advice?

So, I dug into the data from our most recent industry survey on enterprise social-collaboration technology.

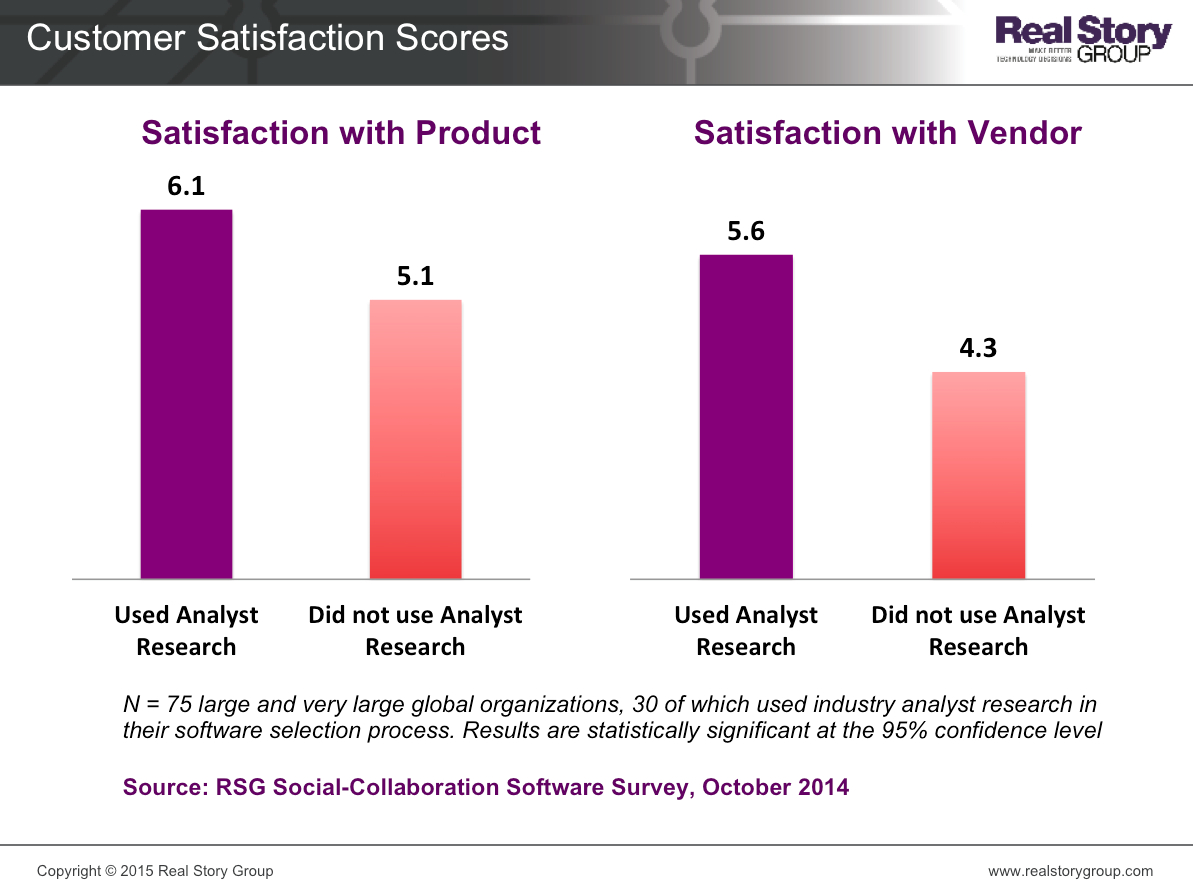

In the survey, we asked customers to rate how satisfied they are with:

- the social-collaboration software products they are using (based on functionality and features) and

- the vendors of these products (based on their responsiveness and support)

In a separate question, we also asked customers about the different inputs that went into their technology selection process.

Here is what we found: Customers who used industry analyst research in their technology selection process were more satisfied with their software products and vendors than those who did not.

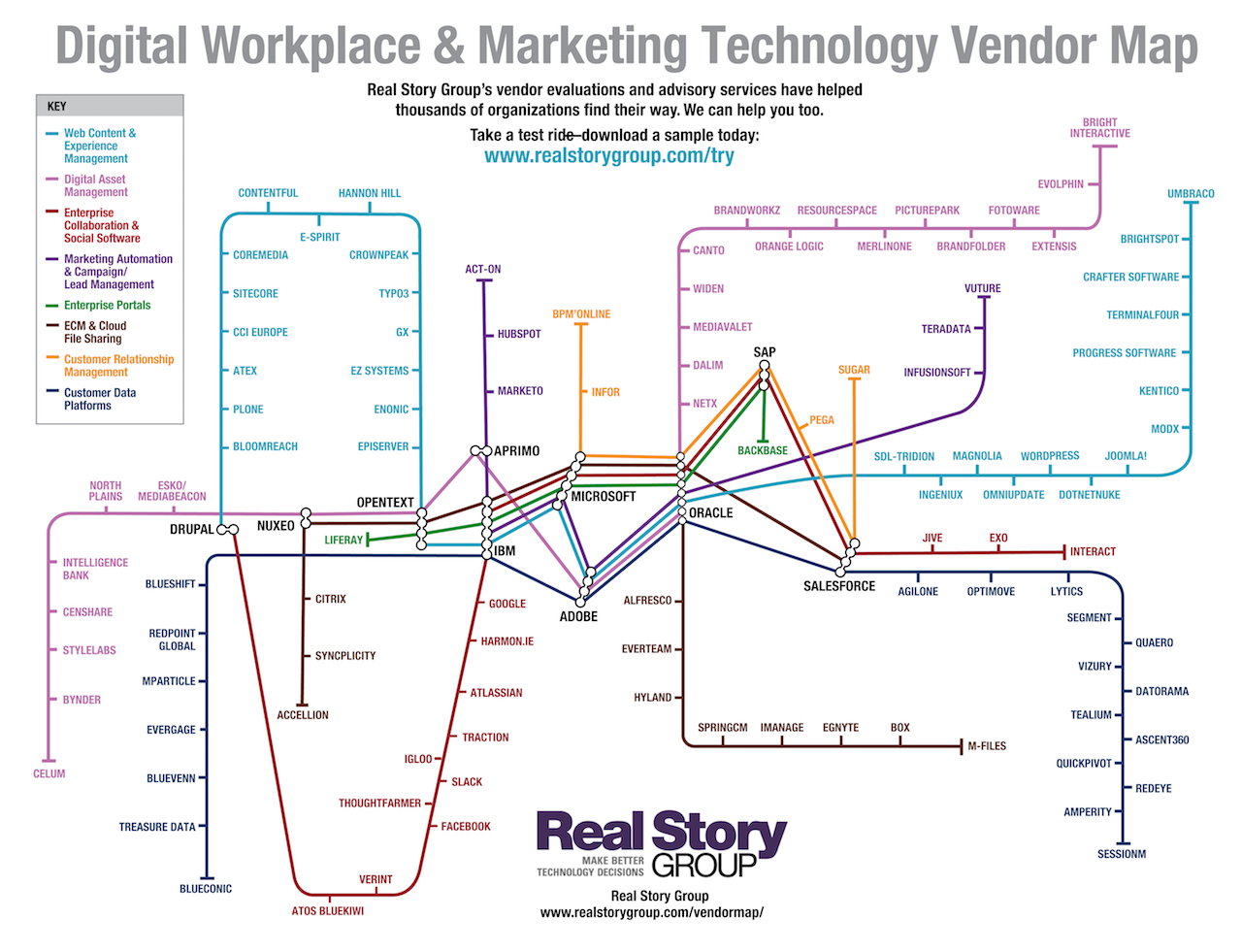

Figure 1: Customers using industry analyst research to inform social-collaboration software selection are more satisfied with their products and vendors.

Could these results be due to chance? Some basic data science (ANOVA, a test of differences between group means, in case you are interested) indicates that the results are statistically significant, i.e., unlikely to be due to random chance alone. There you go.
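For readers who want to see what such a test of group means involves, here is a minimal sketch of a one-way ANOVA F-statistic in plain Python. The ratings below are invented purely for illustration and are not our survey data; the function name `one_way_anova_f` and the two group names are likewise hypothetical.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA over the groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual ratings around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical 1-5 satisfaction ratings, made up for this example only.
used_analyst_research = [4, 5, 4, 4, 5, 3, 4, 5]
no_analyst_research = [3, 4, 2, 3, 4, 3, 2, 3]

f_stat, df_b, df_w = one_way_anova_f([used_analyst_research, no_analyst_research])
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A larger F means the gap between the group means is large relative to the spread within each group. In this made-up example the statistic comes out well above the 5% critical value of roughly 4.60 for (1, 14) degrees of freedom, so the difference would count as statistically significant. In practice, a library routine such as `scipy.stats.f_oneway` returns both the F-statistic and its p-value.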

I suspect you intuitively understand the cause and effect here. Expert knowledge of the products and vendors, an in-depth understanding of their strengths and weaknesses, and the experiences of other customers who used those products are all reflected in industry analyst research such as RSG's.

This can help you choose the best-fit software for your requirements and avoid expensive mistakes down the road. Such customers are naturally more satisfied than those who ended up with products that don't work as advertised or simply prove to be a mismatch. Time and again, we hear this anecdotally from our blue-chip customers.

Now, there's also quantitative evidence about the impact of analyst research more generally. I'm certainly happy to be part of your success.